Ivan Smirnov·hackernoon.com·· 3 min read

LLMs Get Smart: How System Prompts Unlock Better Instruction-following

frontend intermediate

TL;DR

LLMs just got a lot smarter, thanks to Google's revelation of system prompts' inner workings.

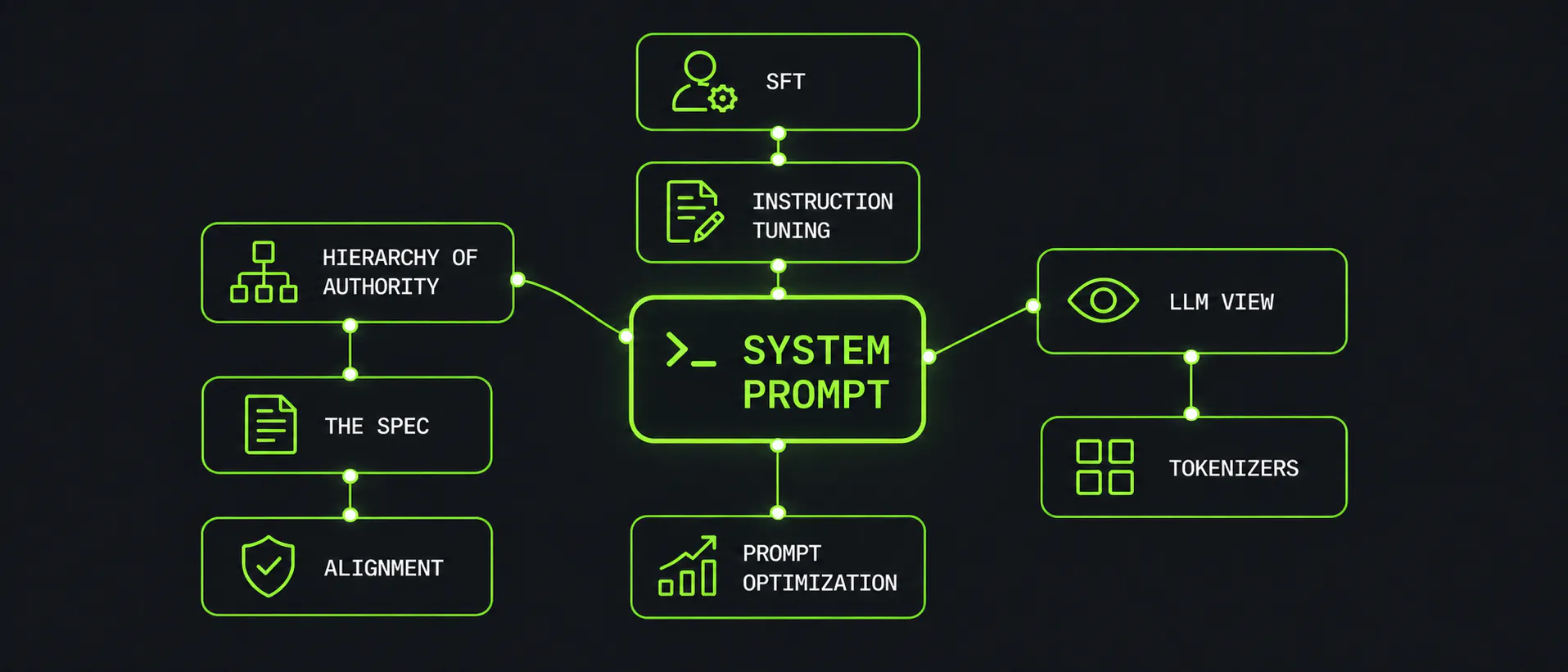

Google's Large Language Models (LLMs) just got a whole lot smarter - literally. The tech giant has revealed the inner workings of system prompts, which dictate how LLMs behave, use tools, and follow policies. This breakthrough means developers can now write better prompts, evaluate them systematically, and reduce security risks like jailbreaks and prompt injection.

Key Takeaways

- •Evaluate your prompts: Use this new understanding to ensure your LLMs are following instructions correctly

- •Write smarter prompts: Develop prompts that take into account how LLMs learn from system prompts

- •Secure your LLMs: Implement measures to prevent security risks like jailbreaks and prompt injection

llmsnatural language processingsystem prompts

High Quality Source

Originally published by Ivan Smirnov on hackernoon.com. Summarized by ContentBuffer.

Comments

Subscribe to join the conversation...

Be the first to comment

Enjoyed this article?

Get it daily. 7am. Free. Reads in 5 minutes.