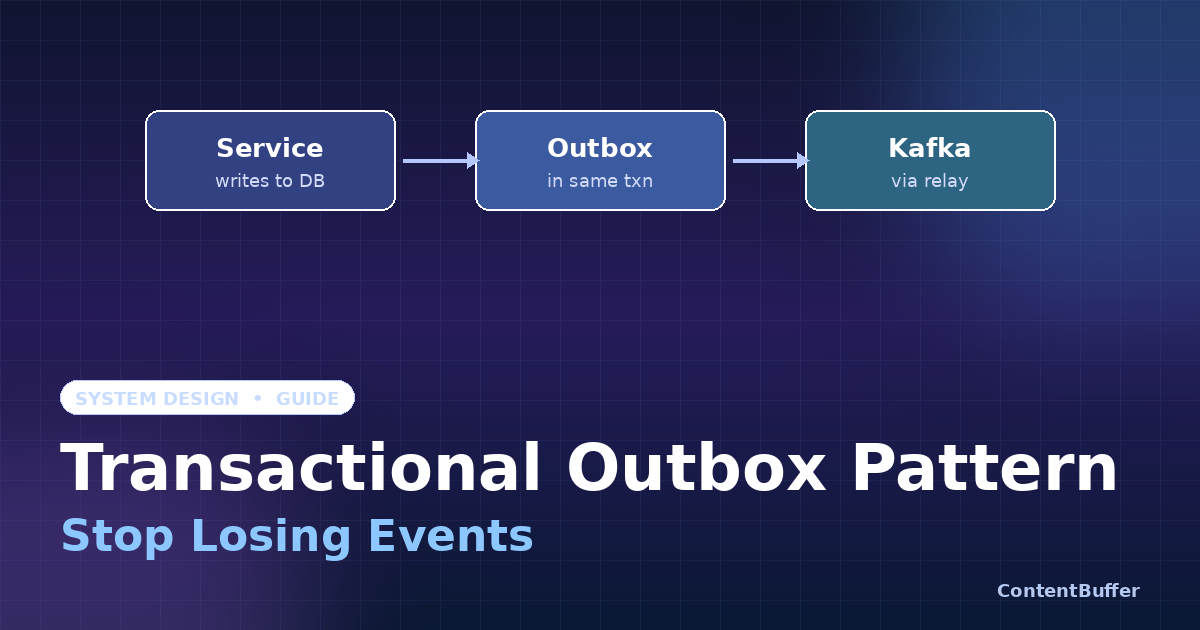

Transactional Outbox Pattern: Stop Losing Events

Summary

Guarantee events publish even when Kafka is down. Outbox pattern with Postgres, step-by-step.

Why your events keep disappearing

You wrap a database write and a Kafka publish in the same function. The DB succeeds, then Kafka times out — or the reverse. Either way you now have an inconsistent system: an order exists with no OrderCreated event, or an event fired for a row that was never committed. This is the dual-write problem, and it shows up in every microservices stack the moment two pieces of state need to stay in sync.

The Transactional Outbox pattern fixes this by reducing the problem to one durable write. Instead of writing to two systems, you write your business row and your event to the same database, in the same transaction. A separate relay process ships the event to Kafka after the fact. If Kafka is down, the event waits. If your app crashes, the event waits. No more lost messages, no distributed transactions, no XA.

This guide walks through a production-grade outbox in PostgreSQL — schema, code, relay loop, and the four mistakes that cost teams a weekend each. By the end you can paste this into a service today.

Prerequisites

- PostgreSQL 13+ (we'll use

SKIP LOCKED) or any RDBMS with row-level locks - Kafka, RabbitMQ, SNS, or any message broker — the pattern is broker-agnostic

- Comfortable with SQL transactions and at least one backend language (we'll use Python; Node and Go versions are trivial)

- Basic familiarity with at-least-once delivery semantics

Step 1: Understand the dual-write trap

Here's the broken code that ships in nearly every greenfield service:

# BROKEN: dual write

def create_order(order):

db.insert("orders", order) # 1

kafka.publish("orders.created", order) # 2

return orderThree failure modes:

- Step 1 commits, step 2 throws — order exists, no event. Downstream services (inventory, billing, search) never hear about it.

- Step 2 publishes, step 1 rolls back later (e.g., a constraint check) — phantom event for a row that doesn't exist.

- The process crashes between 1 and 2 — same as case 1, but harder to detect.

Wrapping both in a try/except doesn't help — there is no atomic primitive that spans Postgres and Kafka. XA transactions exist but they are slow, fragile, and most managed brokers don't support them.

Step 2: Add an outbox table

The outbox is just another table in your service's database. Every event you want to publish gets inserted there — in the same transaction as the business write.

CREATE TABLE outbox (

id BIGSERIAL PRIMARY KEY,

aggregate_id TEXT NOT NULL, -- e.g. order id

aggregate TEXT NOT NULL, -- "order", "user", ...

event_type TEXT NOT NULL, -- "OrderCreated"

payload JSONB NOT NULL,

created_at TIMESTAMPTZ NOT NULL DEFAULT now(),

published_at TIMESTAMPTZ, -- NULL until relayed

attempts INT NOT NULL DEFAULT 0

);

-- The relay only cares about unpublished rows.

CREATE INDEX outbox_unpublished_idx

ON outbox (created_at)

WHERE published_at IS NULL;A few design notes baked into that schema:

aggregate_id+aggregatelet consumers route or partition by entity. Kafka producers will useaggregate_idas the message key so events for the same order land on the same partition in order.payloadis JSONB so you can evolve the event shape without migrations.- The partial index on

published_at IS NULLkeeps the relay query fast even when the table grows to millions of rows — the published rows fall out of the index entirely. - We keep published rows around for audit and replay; a nightly job archives anything older than N days.

Step 3: Write business + event in one transaction

Your service code now looks like this. Notice that nothing talks to Kafka — that's the whole point.

# Correct: single transaction, no broker call

def create_order(order, conn):

with conn.transaction():

conn.execute(

"INSERT INTO orders (id, customer_id, total, status) "

"VALUES (%s, %s, %s, 'PENDING')",

(order.id, order.customer_id, order.total),

)

conn.execute(

"INSERT INTO outbox "

" (aggregate_id, aggregate, event_type, payload) "

"VALUES (%s, 'order', 'OrderCreated', %s::jsonb)",

(order.id, json.dumps(order.to_event())),

)

return order # both rows committed, or neitherPostgres guarantees both inserts succeed together or fail together. There is no broker call in the request path — your endpoint stays fast and stops depending on Kafka's availability for correctness.

Step 4: Build the relay (poller version)

The relay is a small worker that reads unpublished rows, sends them to Kafka, and marks them published. The trick is to do this safely across multiple relay instances — you'll run more than one for HA.

# relay.py — runs as N replicas in your cluster

import json, time, psycopg

from confluent_kafka import Producer

producer = Producer({"bootstrap.servers": "kafka:9092",

"enable.idempotence": True,

"acks": "all"})

BATCH = 100

SLEEP = 0.2

def relay_loop(conn):

while True:

with conn.transaction():

rows = conn.execute(

# SKIP LOCKED is the magic — replicas never fight over rows.

f"""SELECT id, aggregate_id, event_type, payload

FROM outbox

WHERE published_at IS NULL

ORDER BY id

LIMIT {BATCH}

FOR UPDATE SKIP LOCKED"""

).fetchall()

if not rows:

time.sleep(SLEEP); continue

for r in rows:

producer.produce(

topic = "orders.created",

key = r["aggregate_id"].encode(),

value = json.dumps(r["payload"]).encode(),

headers = [("event_type", r["event_type"].encode()),

("outbox_id", str(r["id"]).encode())],

)

producer.flush(timeout=5) # block until acked or fail

ids = [r["id"] for r in rows]

conn.execute(

"UPDATE outbox SET published_at = now() WHERE id = ANY(%s)",

(ids,)

)

# transaction commits, locks releaseThree things make this safe under concurrency:

FOR UPDATE SKIP LOCKED— each relay grabs a different batch. No coordination, no Redis lock, no leader election.producer.flush()blocks until Kafka acks. If it raises, the transaction rolls back and the rows staypublished_at = NULLfor the next attempt.enable.idempotence=True+acks=all— Kafka itself dedupes producer retries, so consumers see each event at-least-once but never out of order within a partition.

Step 5: The CDC alternative (Debezium)

Polling works, but it puts query load on your primary DB and has ~200ms tail latency. The other option is Change Data Capture: point Debezium at the Postgres write-ahead log, watch the outbox table for inserts, and stream them straight to Kafka.

Architecturally it's the same pattern — same table, same atomic write — but the relay is replaced by a Kafka Connect worker reading the WAL. You get sub-50ms latency and zero query load on your DB. The trade-off is operational: you now run Debezium, manage replication slots, and care about WAL retention.

Rule of thumb: start with the polling relay. It's 80 lines of code and you can run it tomorrow. Move to Debezium when polling latency or DB load starts to hurt.

Common pitfalls (the ones that cost a weekend)

1. Forgetting the partial index. Without WHERE published_at IS NULL on the index, the relay's SELECT ... ORDER BY id turns into a sequential scan once you're past a few million rows. The relay falls behind, the outbox grows, the index gets even slower — death spiral. Always include the partial index from day one.

2. Letting the outbox grow forever. Even with the partial index, VACUUM has to walk the whole table. Run a daily job that deletes (or archives to cold storage) rows where published_at < now() - interval '7 days'. Keep enough history for replay; not enough to wreck autovacuum.

3. Treating consumers like exactly-once. The outbox guarantees at-least-once delivery, not exactly-once. A relay can publish a row, crash before UPDATE published_at, and a sibling relay republishes the same event. Consumers must be idempotent — typically by deduping on outbox_id in a header, or on a natural business key.

4. Holding the transaction open too long. Every relay batch holds row locks until the Kafka flush completes. If Kafka is slow, those locks pile up and block writes from your service. Mitigation: set a short request.timeout.ms on the producer, keep batches small (50-200 rows), and put a hard timeout on the entire transaction. Better one slow batch retried than your API going read-only because the relay is wedged.

Quick reference

| Concern | What to do |

|---|---|

| Atomicity | INSERT business row + INSERT outbox row in one DB transaction |

| Concurrency | FOR UPDATE SKIP LOCKED — multiple relays, no coordination |

| Ordering | Use aggregate_id as Kafka message key; one partition per aggregate |

| Throughput | Batches of 50-200 rows; tune by p99 publish latency, not throughput |

| Cleanup | Daily job: delete WHERE published_at < now() - interval '7 days' |

| Latency budget | Polling: ~200ms. Debezium/CDC: ~50ms. Both at-least-once. |

| Consumer contract | Idempotent on outbox_id header or business key — always |

Next steps

Three places to take this further once the basic pattern is humming:

- Saga orchestration. Once your services emit reliable events, you can chain them into multi-step business workflows with compensation steps. The outbox is the foundation; saga is the choreography on top.

- Event sourcing. If you find yourself writing every state change as both a row mutation and an outbox event, consider going further — store the events as the source of truth and project the orders table from them.

- Schema registry. Outbox payloads are JSONB today. Lock them down with Avro or Protobuf in a schema registry before consumers start to drift. Future-you will be grateful.

That's the whole pattern. Two tables, one transaction, a 60-line relay. It is the cheapest reliability win in distributed systems — apply it before you reach for sagas, eventual consistency frameworks, or distributed transactions.

Comments

Be the first to comment