How to Use Meta Muse Spark: Free AI That Thinks

Summary

Step-by-step tutorial for Meta's new Muse Spark multimodal AI model

Meta released Muse Spark on April 8, 2026. It is a free, multimodal AI model that reasons through problems in two distinct modes. Instead of always answering immediately, Muse Spark can "think" through complex queries before it responds, which makes it useful for both work and creative tasks.

This guide shows you how to access it, use both thinking modes, and leverage its unique features like shopping assistance and multi-agent reasoning.

1. What We're Building

By the end of this tutorial, you'll be able to:

- Access Muse Spark on Meta AI, mobile apps, and glasses

- Use Instant mode for quick answers

- Activate Thinking mode for complex problems

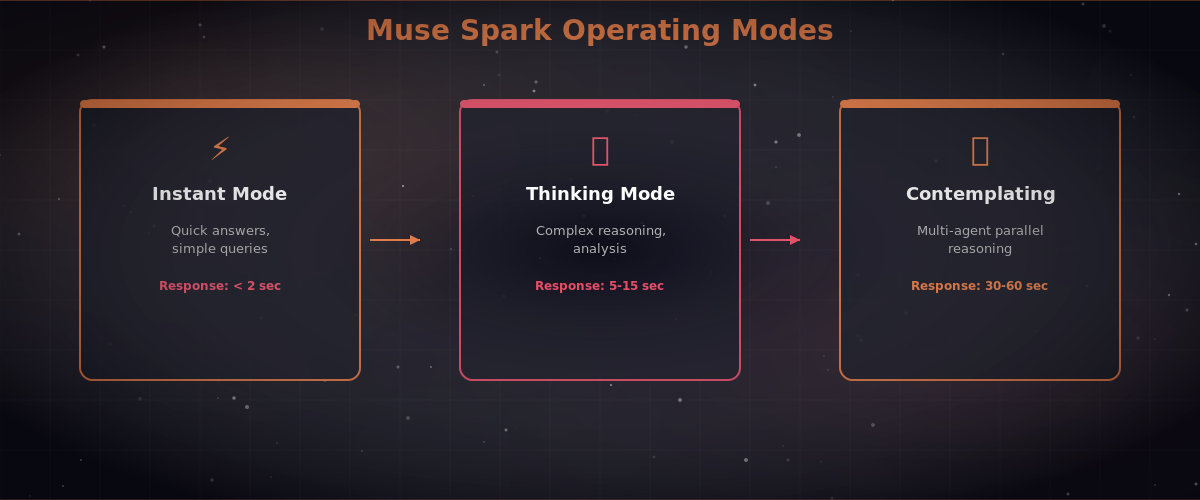

- Prepare for Contemplating mode (multi-agent parallel reasoning, coming soon)

- Use the built-in Shopping mode to find clothes and design rooms

- Understand when to use Muse Spark vs. other AI tools

2. What You Need

- A Meta account (Facebook or Instagram login)

- US-based access (currently available in the US only)

- One of these devices/platforms:

- A web browser (for meta.ai)

- Meta AI mobile app (iOS/Android)

- WhatsApp, Instagram, Messenger, or Facebook (Muse Spark is rolling out)

- Meta AI glasses (Ray-Ban collaboration, rolling out)

- 5 minutes to set up and test

About Muse Spark's design: It's built to be small and fast, requiring about 10x less compute than Llama 4 Maverick. That means faster responses and lower latency, while it remains competitive with GPT 5.4, Opus 4.6, and Gemini 3.1 Pro on complex benchmarks.

3. Step-by-Step Build

Step 1: Access Muse Spark on meta.ai

- Open your web browser and go to meta.ai

- Click "Log In" in the top-right corner

- Select "Continue with Facebook" or "Continue with Instagram"

- Enter your credentials

- Grant permission to access your Meta account

- You'll see the Muse Spark chat interface with two mode buttons: Instant (lightning bolt icon) and Thinking (brain icon)

Expected: You're now logged in and see a chat window with a text input box at the bottom.

Step 2: Use Instant Mode for Quick Questions

Instant mode is perfect for straightforward queries that need fast answers.

Try this:

- Make sure the Instant button is selected (default)

- In the text box, type: What are the 5 best ways to learn Python in 2026?

- Press Enter

Expected output: Muse Spark returns a list like this:

- Interactive coding platforms (Codecademy, HackerRank)

- Project-based learning (build a Discord bot, web scraper)

- AI-assisted tutorials (use Muse Spark itself to explain concepts)

- Community forums (Stack Overflow, r/learnprogramming)

- Video courses (YouTube, Udemy—updated for 2026 Python standards)

The response comes in seconds. Instant mode is ideal for facts, summaries, and creative brainstorming.

Step 3: Activate Thinking Mode for Complex Reasoning

Thinking mode is where Muse Spark shines. It internally "thinks" through multi-step problems before responding—similar to how OpenAI's o1 works.

Try this:

- Click the Thinking button (brain icon)

- Type: I have $5,000 to invest and 10 years until retirement. I'm risk-averse but need growth. Should I use index funds, bonds, or a mix? Walk me through the math.

- Press Enter

Expected output: Muse Spark pauses for 10-15 seconds (you'll see "Thinking..."), then returns a detailed response like:

- Suggested allocation: 70% index funds / 30% bonds, with an assumed 7-8% annual return and moderate volatility

- A decade of compound growth calculation (showing a ~$9,800-$10,800 ending balance at 7-8% compounded annually)

- Risk assessment specific to your profile

- Disclaimer: not financial advice, consult an advisor

You'll notice the response is more thorough, with explicit reasoning shown.
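The compound growth figure in a response like this is easy to check yourself. Here is a minimal sketch, assuming a one-time $5,000 deposit, annual compounding, no fees or taxes, and the 7-8% return range from the example; the function name `future_value` is just an illustrative choice.

```python
def future_value(principal: float, annual_rate: float, years: int) -> float:
    """Value of a one-time deposit after compounding once per year."""
    return principal * (1 + annual_rate) ** years


if __name__ == "__main__":
    # Assumed inputs: $5,000 invested for 10 years at 7% and at 8%.
    for rate in (0.07, 0.08):
        print(f"{rate:.0%}: ${future_value(5_000, rate, 10):,.2f}")
    # 7%: $9,835.76
    # 8%: $10,794.62
```

Different assumptions (fees, a lower blended return, or regular contributions) shift the result, so treat any single figure in an AI response as a starting point for your own check.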

Another Thinking mode example:

- Prompt: Describe a novel plot twist that hasn't been done before in sci-fi. Include character motivations and how it reframes the story.

- Expected: A unique, detailed plot with internal logic—not just a generic twist.

Step 4: Input Multiple Modalities (Voice, Image, Text)

Muse Spark accepts voice, text, and images as input.

To use voice:

- Look for the microphone icon next to the text input

- Click it and speak your question naturally

- Muse Spark transcribes and processes it

Example: Say aloud, "Show me how to style a minimalist bedroom," and it processes the voice input as if you typed it.

To use images:

- Click the image/attachment icon (paperclip or photo icon)

- Upload or take a photo (e.g., a blurry handwritten note, a room you want to redesign, a fashion item)

- Ask a follow-up question: What colors would match this couch?

Expected: Muse Spark analyzes the image and suggests matching colors in its reply.

Step 5: Try Shopping Mode

Muse Spark includes a built-in Shopping mode for styling and decoration.

To use it:

- Say or type: Help me find outfits for a summer beach vacation

- Muse Spark shows clickable product recommendations (clothes, accessories)

- Click any item to see details or buy directly from integrated retailers

- For home design: "Redesign my living room in a Scandinavian style" → Muse Spark suggests furniture and decor with direct purchase links

Expected output: A curated mood board with shoppable items, prices, and links to buy.

Step 6: Prepare for Contemplating Mode (Coming Soon)

Contemplating mode—launching later in 2026—will use multi-agent parallel reasoning: multiple reasoning agents tackle the same problem simultaneously, then synthesize their conclusions.

For now, you can't access it, but you'll see it announced on meta.ai or in the Meta AI app.
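To build intuition for "multiple agents, then synthesis," here is a toy sketch. It is not how Contemplating mode is implemented (Meta has not published that); every name here (`trial_division_agent`, `fermat_agent`, `contemplate`) is hypothetical. Three simple "agents" answer the same question (is `n` prime?) in parallel using different methods, and a majority vote combines their answers.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from math import isqrt


def trial_division_agent(n: int) -> bool:
    """Slow but always correct: test every divisor up to sqrt(n)."""
    if n < 2:
        return False
    return all(n % d for d in range(2, isqrt(n) + 1))


def fermat_agent(base: int):
    """Build a fast agent using a Fermat test, which can be fooled."""
    def agent(n: int) -> bool:
        if n < 2:
            return False
        if n % base == 0:
            return n == base
        return pow(base, n - 1, n) == 1
    return agent


# Hypothetical agent pool: an odd count so a boolean vote cannot tie.
AGENTS = [trial_division_agent, fermat_agent(2), fermat_agent(3)]


def contemplate(n: int) -> bool:
    """Run all agents in parallel, then synthesize by majority vote."""
    with ThreadPoolExecutor(max_workers=len(AGENTS)) as pool:
        votes = list(pool.map(lambda agent: agent(n), AGENTS))
    return Counter(votes).most_common(1)[0][0]


if __name__ == "__main__":
    # 561 = 3 * 11 * 17 fools the base-2 Fermat agent, but the vote is right.
    print(contemplate(561))  # False
    print(contemplate(97))   # True
```

The point of the toy is the shape: independent attempts run side by side, and the combining step can outvote one agent's mistake.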

4. Test It / Run It

Verification Checklist

- You've logged into meta.ai with your Meta account

- You've sent at least one message in Instant mode and got a response in <5 seconds

- You've sent at least one message in Thinking mode and watched it "think"

- You've tested voice input (spoke a question and saw it transcribed)

- You've uploaded an image and received feedback on it

- You've tried Shopping mode and seen product recommendations

- You confirmed Muse Spark is available in your region (US for now)

Quick Benchmark Test

Try this advanced prompt to see Muse Spark's capabilities:

Prompt: Code a Python function that finds all prime numbers up to 1 million, but optimizes for memory. Explain the algorithm's time complexity.

Instant mode: Returns code but may skip optimization reasoning.

Thinking mode: Pauses, then explains the Sieve of Eratosthenes algorithm, shows optimized code, and provides Big O complexity analysis.

This shows the difference between quick answers and reasoned answers.
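For reference, here is a minimal sketch of the kind of memory-conscious answer that prompt asks for. It is not Muse Spark's actual output, just one common approach: a Sieve of Eratosthenes that stores flags only for odd numbers in a `bytearray`, using roughly n/2 bytes. The function name `primes_up_to` is an illustrative choice.

```python
from math import isqrt


def primes_up_to(n: int) -> list[int]:
    """Return all primes <= n using an odd-only Sieve of Eratosthenes."""
    if n < 2:
        return []
    # Index i stands for the odd number 2*i + 1, so we need (n + 1) // 2 flags.
    size = (n + 1) // 2
    is_prime = bytearray([1]) * size
    is_prime[0] = 0  # index 0 is the number 1, which is not prime
    for i in range(1, isqrt(n) // 2 + 1):
        if is_prime[i]:
            p = 2 * i + 1
            # Start crossing out at p*p; a step of p in index space is a
            # step of 2*p in value, which skips the even multiples.
            start = (p * p) // 2
            is_prime[start::p] = bytes(len(range(start, size, p)))
    return [2] + [2 * i + 1 for i in range(1, size) if is_prime[i]]


if __name__ == "__main__":
    primes = primes_up_to(1_000_000)
    print(len(primes), primes[-1])  # 78498 999983
```

The sieve runs in O(n log log n) time. The flag array takes about 500 KB for n = 1 million, though the returned Python list of primes is larger than that; yielding primes from a generator would avoid holding them all at once.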

5. Next Steps

- Explore use cases: Try Muse Spark on your real problems—work emails, creative writing, code debugging, design decisions

- Compare modes: Log which tasks benefit from Instant vs. Thinking to build intuition

- Check for app rollout: Muse Spark is rolling out to WhatsApp, Instagram, Messenger, and Facebook—check if it's available in your account yet

- Wait for Contemplating mode: Multi-agent reasoning will be powerful for very complex decisions; watch for announcements

- Understand the limits:

- Muse Spark is closed-source (unlike Meta's Llama models), so you can't run it locally

- Text output only (no image generation, unlike DALL-E or Midjourney)

- US only for now; international rollout expected mid-2026

Why Muse Spark Matters

Meta designed Muse Spark to be small, fast, and thoughtful. It requires about 10x less compute than Llama 4 Maverick, meaning:

- Faster response times

- Lower cost (free for now)

- Potential for on-device deployment on glasses and phones

For most users, Thinking mode bridges the gap between quick AI assistants and reasoning systems, making complex problems solvable without paying per-query.
